BCP with BDR (SqlBulkCopy + IDataReader = BulkDataReader)

Anthony Bloesch's Web Log - Bulk loading data with IDataReader and SqlBulkCopy

"Often large amounts of data need to be quickly loaded into a database. A common approach is to fill a DataTable and use the SqlBulkCopy class to load the data. The problem with this approach is that the data set must be materialized in memory. This is inefficient because the data must be copied and transformed several times and the cache utilization is poor. As well it does not scale because memory is usually a limited resource. But it is far faster than one row at a time inserts.

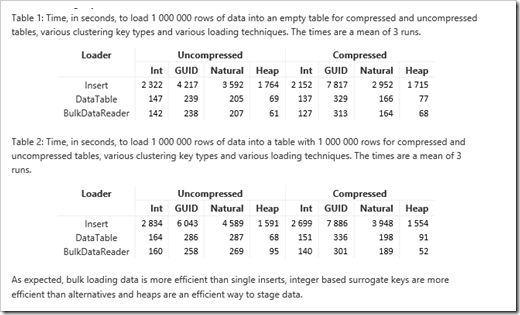

An alternative is to implement an IDataReader and use the SqlBulkCopy class to load the data but the implementation requirements are poorly documented. I have developed the BulkDataReader class that makes the implementation straightforward. With the class, it is possible to stream the data from one source into a database. Since the data has fewer transformations and is streamed from buffer to buffer (and thus utilizes caches better) the performance and resource utilization is much better than other approaches. In practice, the limiting factor with this approach is how fast the data can be read from the data source. In early releases of the .Net Framework, the DataTable approach was much slower than the IDataReader approach but DataTables are now much more efficient and the approaches have comparable performance when memory is not a constraint.

Bulk loading data is much faster that loading data with single inserts because the repeated overhead of transferring the data, parsing the insert statement, running the statement and issuing a transaction record is avoided. Instead, a more efficient path is used into the storage engine to stream the data. The setup cost of this path is however much higher than a single insert statement. The break-even point is typically around 10 to 100 rows. Once the data exceeds this size, bulk loading is almost always more efficient.

...

BulkDataReader

The download has all the code and test code for the BulkDataReader class. To implement an IDataReader using the BulkDataReader, do the following:

· Override the SchemaName and TableName properties with meaningful names. These need not match the target schema and table names and are useful for debugging.

· Override the AddSchemaTableRows method to declare the schema of the source information. In general, this should match the target schema but some remapping is possible.

· Override the GetValue method to return the value of a column for the current row.

· Override the Read method to advance to the next row.

· If the subclass contains disposable resources, override the dispose method

...

..."

This is SqlBulkCopy is something I've started using recently and I think I've been using the DataTable approach. Now, no more of that!

No comments:

Post a Comment